Thanks for being here!

Announcement(s):

#1 - Our transaction data is now available at a more granular “row level”.

#2 - We now have single-merchant gift card data available. Yes, really!

Contact me to learn more.

The theme that emerged in this week’s email is … the importance of open data cannot be overstated … but the issue of data quality is not going away …

QUOTES

“Tulchinsky (CEO of WorldQuant) believes there can be little doubt that data is set to become one of the most valuable commodities in this new information age. He further believes harnessing the abundant potential of data promises to create value on an unimaginable scale, while simultaneously unleashing a new era of global innovation.”

News Articles

Podcasts

Cool Charts

Final Thoughts (tools)

#1 – Seattle Data Guy published Looking Past Data Infrastructure - How To Deliver Value With Data. February 2023.

My Take: I’ve highlighted a number of Seattle Data Guy’s articles in recent weeks. If you are in the data space, you should be on his Substack list. This article is a summary of his interview with Gordon Wong, former business intelligence and data engineering VP at both HubSpot and Fitbit. There is a TON of good stuff in this article. Two things resonated with me:

First, the story of the two companies: company #1 picked Hadoop and company #2 picked Snowflake. Snowflake proved to be the better tool, and company #2 ended up in a much better position because of that choice. See below for my Final Thoughts about the importance of picking the right tools.

The second item that resonated with me is to know who your customers are, what they care about, and how well you are helping them now. This may seem obvious, but in practice, it is not.

#2 – Girard Miller from Governing published More and Better Uses Ahead for Governments’ Financial Data. January 2023.

My Take: Transparency is good. The Financial Data Transparency Act (FDTA), which is now federal law, should take steps in that direction. Of course, the devil is in the details, and I would not expect any seismic shifts in our day-to-day lives, but this is a step in the right direction. The analogy used in the article is that if 4,000 publicly traded US companies can produce quarterly filings that are roughly standardized, why can’t the 50 states and 1,000 largest cities/counties do the same?

It will be interesting to watch who pushes back on what … that is surely the easiest way to find the most valuable data … because if someone has found a diamond in the deluge of government data … they will surely not want that to become more accessible.

A good summary of the Act can be found here (Thanks Jenna Magan, Hoang Vu, and James Hernandez of Orrick).

#3 – Michael Mayhew of Eagle Alpha published A Guide to Getting Started with Open Data. February 2023.

My Take: Related to the above FDTA article, the importance of open data is highlighted here as well. As is echoed in Alex Izydorczyk’s article highlighted below, “It is important to note that vendors from other alternative data categories also actively use open data sources to improve their product offerings.” This open data provides a ground truth to which you can compare other data sources to determine which lead vs lag, or frankly, which data provides no unique insight at all.
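For readers who want to try the lead vs lag comparison, here is a minimal sketch. It assumes you have two equally spaced series of the same length: an open-data "ground truth" and a candidate dataset. The function names (`pearson`, `best_lag`) and the numbers are hypothetical, made up for illustration, and a shifted correlation is only one simple way to approach this, not a method taken from the article.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation of two equal-length lists (0.0 if either is flat)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        return 0.0
    return cov / (sx * sy)

def best_lag(ground_truth, candidate, max_lag=3):
    """Return (lag, correlation) for the shift with the highest correlation.

    A positive lag means the candidate LEADS the ground truth by that many
    periods; a negative lag means it LAGS.
    """
    n = len(ground_truth)
    scores = {}
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            # candidate[t] is compared with ground_truth[t + lag]
            x, y = candidate[: n - lag], ground_truth[lag:]
        else:
            # candidate[t] is compared with ground_truth[t + lag], lag < 0
            x, y = candidate[-lag:], ground_truth[: n + lag]
        scores[lag] = pearson(x, y)
    best = max(scores, key=scores.get)
    return best, scores[best]

# Toy numbers (not real data): the candidate is the ground truth shifted
# two periods earlier, padded with two arbitrary values at the end.
open_data = [10, 12, 15, 11, 9, 14, 18, 13, 10, 16]
vendor_data = open_data[2:] + [12, 11]
print(best_lag(open_data, vendor_data))
```

If the best correlation is weak at every lag, that is a hint the candidate may offer little insight relative to the benchmark, although a short sample like this one proves nothing on its own.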

BONUS: Alex Izydorczyk published Free as in freedom, not as in beer. February 2023. This relates to both Eagle Alpha’s open data article and the FDTA article highlighted above. There is huge value in effectively engaging with publicly available data. And as Alex says…

“…these data are a prerequisite for making meaningful use of proprietary, external data sources.”

BONUS 2: TechJury published 25+ Impressive Big Data Statistics for 2023. February 2023.

BONUS 3: WorldQuant published The future will be here faster than you think on CNBC. January 2023. See Tulchinsky quote above.

#1 – Tobias Macey of The Data Engineering Podcast published Reflecting On The Past 6 Years Of Data Engineering. February 2023.

My Take: I’ve learned a lot from listening to this podcast. This episode provides a good overview of the past six years and the massive changes that have occurred in this space. There is A LOT of information packed into roughly 30 minutes. Of most interest to me was the importance of metadata and the term “active metadata” … which is really just telling people what they’ve got. Sounds like that might be easy, but it’s not.

Highlights (32-minute run time):

Minute 01:00 – background on podcast (since 2017).

Minute 03:00 – data engineering role came about because data scientists needed better data to do their job; commentary on Hadoop.

Minute 06:30 – scaling problems with streaming data; data lakes.

Minute 08:00 – era of data catalogs; now evolved into data discovery and the importance of metadata.

Minute 09:30 – importance of orchestration engines (once task-based … now more aware of what and why, which is needed).

Minute 11:00 – cloud data warehouses are the big change in the last 5 years (Redshift, BigQuery, Snowflake). They have become a junction of everything.

Minute 12:30 – shifted conversation from ETL to ELT. Can be more agile and build on top of successive iterations. dbt has also been “transformational”.

Minute 14:15 – data lakes still in the conversation. The data lakehouse offers the scalability benefits of a data lake but with better interfaces.

Minute 15:15 – working with data now has a lower barrier to entry. Allows people in the space to work on higher-order concerns. Repeatable. Stable. Robust. Know when things fail.

Minute 18:30 – metadata starting to coalesce into tooling that can encapsulate concerns around quality and lineage. Understand how data is being used. “Active metadata”.

Minute 21:30 – embedded analytics; how are people engaging with the data? How to surface new insights?

Minute 23:00 – “Complete the cycle of data” … what is next action? Allows you to continue to iterate and improve.

Minute 24:30 – looking forward: “ML today is where data engineering was 6 years ago.” Next step is operationalization of ML.

Minute 27:45 – lessons learned from running the podcast. Challenge of what is valuable and keeping up with all the change. Looking to add more engagement with audience.

#2 – Matthew Bernath’s Financial Modeling Podcast published Transforming Financial Services with Data Analytics. January 2022 (this is more than a year old, but I thought it was still interesting).

My Take: I have really enjoyed getting to know Matthew over the past few months and look forward to more podcast episodes in the future!

Highlights (15-minute run time):

Minute 01:00 – intro

Minute 02:00 – banking industry challenges that can be addressed with AI; 80% of banks think AI can help them address challenges.

Minute 02:30 – what AI is all about

Minute 04:00 – why now?

Minute 05:45 – challenges and risks to implementing AI

Minute 07:30 – discussion of AI use cases (most interesting: Uber example at 8:30 about onboarding new customers at scale)

Source: Precisely published Data Quality 101: Build Business Value with Data You Can Trust

I like this one … when talking data quality, I usually use the “Three Cs”: Complete. Consistent. Clean. …but this is even better.
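To make the “Three Cs” concrete, here is a rough sketch of how I might test a batch of records against them. The definitions in the code are my own working assumptions rather than a standard: Complete means no required field is missing, Consistent means each field holds a single data type across rows, and Clean means no duplicate IDs. The function name, field names, and sample rows are all hypothetical.

```python
def three_cs(rows, required, key):
    """Check a list of dict records against the "Three Cs" (assumed definitions).

    rows     -- list of dicts, one per record
    required -- field names that must be populated in every record
    key      -- field that should uniquely identify a record
    """
    # Complete: every required field is present and non-empty.
    complete = all(r.get(f) not in (None, "") for r in rows for f in required)

    # Consistent: ignoring missing values, each field holds one data type.
    consistent = all(
        len({type(r[f]) for r in rows if r.get(f) not in (None, "")}) <= 1
        for f in required
    )

    # Clean: no two records share the same key.
    keys = [r.get(key) for r in rows]
    clean = len(keys) == len(set(keys))

    return {"complete": complete, "consistent": consistent, "clean": clean}

# Made-up sample rows: one missing amount, one amount stored as text,
# and a repeated txn_id.
sample = [
    {"txn_id": 1, "merchant": "A", "amount": 12.5},
    {"txn_id": 2, "merchant": "B", "amount": None},
    {"txn_id": 2, "merchant": "B", "amount": "7.00"},
]
print(three_cs(sample, required=["merchant", "amount"], key="txn_id"))
```

A real pipeline would report which rows fail and why, but even a pass/fail summary like this is a useful first look at a new dataset.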

How to get better:

Precisely also published an eBook on Data Governance 101.

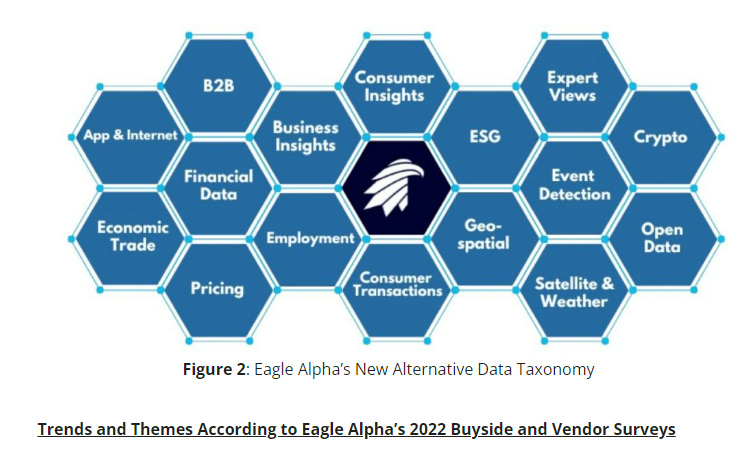

BONUS: Eagle Alpha’s updated alternative data taxonomy of 16 primary categories and 56 subcategories:

Source: My brain.

Tools.

My career is spanning an interesting time in history. My first “real job” was in 1995. I graduated from college without an email address. I didn’t have any mobile phone, much less a smartphone, until 1998. Even when I was fully hooked on email, until my first BlackBerry in 2004, all trips out of the office were made without any connectivity. I’d return to a bunch of unread emails (and likely a pile of coiled up faxes too). Unthinkable today.

The tools available to people today are hugely beneficial, but deciding where to focus can be tough.

Picking the right tools is essential.

Seeking some feedback here: what are the best tools being used today to save time & energy to make you and your teams more productive? I’ll share any responses next week & on the socials.

Time savers:

The combination of Doodle, Calendly, and Zoom saves dozens of emails trying to get meetings organized.

Operational:

My business would not be possible without AWS, Snowflake, and Tableau. Data flows seamlessly through the pipes and I can deliver to clients in their preferred format.

Managing networks:

There was a time I had all my contacts in Excel. Now with a good CRM, social media (mostly Twitter & LinkedIn for me), Slack, and Substack, there are myriad ways of managing & engaging with your professional network, both internal to your company & external. I am curious to check out new tools like Gong to improve the efficiency of the sales team.

What frustrates me as inefficient:

Filling out dozens of similar DDQs (due diligence questionnaires).

Keeping my CRM up to date. I try to enter new info to keep it fresh, but it feels like this should be easier.