Thanks for being here!

This is the Alternative Data Weekly for Friday March 1, 2024.

Announcement(s):

Babel Street Data (formerly known as Vertical Knowledge) recently launched a new flights refined dataset containing a breadth & level of granularity that exceeds anything I’ve seen. See Final Thoughts below.

The theme that emerged in this week’s email is how to get trust without transparency, along with the related topic of the importance of data governance.

QUOTES

“The data market, more than most, needs standardization” – Nomad Data

News Articles

Podcasts

Cool Charts

Final Thoughts (come fly away)

#1 – Nomad Data published The Rise and Stall of Data Marketplaces: A Critical Look. February 2024.

My Take: I’ve been a skeptic of data marketplaces. I’m envious of how well Nomad has articulated the problem. There is a ton of complexity on both the buy-side and sell-side of a data marketplace. What little success data marketplaces have seen has been in siloed vertical markets, where both buyers & sellers can really focus. Even then, it is difficult from the buyer’s perspective to really understand what a data vendor is marketing, while the data vendor is struggling to customize its message to hundreds of different buyer personas. This all results in significant inefficiency and has really slowed the overall growth of the data market in recent years. Good to see companies like Nomad addressing the issue.

#2 – Timothy B Lee & James Grimmelmann of UnderstandingAI published Why The New York Times might win its copyright lawsuit against OpenAI. February 2024.

My Take: Most articles I’ve read are dismissive of NYT efforts against OpenAI. This article persuasively argues the other side. The most obvious issue is AI models simply regurgitating the original text on which the models are trained. This of course isn’t a good look for someone trying to convince a judge they are not violating copyright laws.

We are still in the embryonic stages of AI. I still believe owners of proprietary data are well positioned, but time will tell.

#3 – Todd Moyer, President and Chief Operating Officer of Confluence, published Three Trends for 2024: Unstructured Data vs. Rising Regulation in Traders Magazine. February 2024.

My Take: Spoiler alert, here are the three trends Todd predicts will have the most impact on our industry.

Prediction 1: As rising regulation clashes with unstructured data, AI may be the sole solution

Prediction 2: Firms with alternative and/or proprietary data will reap the benefits

Prediction 3: Heightened scrutiny will force private assets to manage risk like public funds

Agree with all three of the predictions … I have been early (AKA wrong?) on prediction #2 for some time now. Let’s hope prop data owners will start reaping in 2024!

BONUS: Matt Ober’s Do Hedge Funds Have Data Budget?. February 2024. “My rule of thumb for most data vendors is to assume if you are selling a new, unique dataset that has a moat and is used by minimal hedge funds, then there is typically going to be interest and an unlimited budget. If you are selling something that is commoditized, something that is hard to work with i.e. data isn’t cleaned, structured or well documented, or you are doing a displacement sale, then its going to be hard and budgets will be tough.”

What else I am reading:

Ethan Bernstein published The Transparency Trap. 2014.

CyberSyn makes daily trading volumes & prices of all US equities and ETFs executed on the NASDAQ available in your Snowflake instance for free.

Three Data Point Thursday published 3 Actionable Tactics To Create A Good Data Strategy. February 2024.

The Data Score published Assessing the ROI of Data. January 2024.

Alex Boden published Information Asymmetry. February 2024.

Source: Nasdaq published What Companies Need to Consider Regarding Policy, Internal Standards, and Best Practices with Data. February 2024.

Dan Joldzic, CEO of Alexandria Technology

Jason Albert, Global Chief Privacy Officer at ADP

Glenn Kurban, Partner at Capco

Mike Pappacena, Partner at ACA Group

Jill Malandrino on Nasdaq TradeTalks (Host)

Round table conversation discussing what data governance is, why it matters, and what companies need to consider regarding policy, internal standards, and best practices.

My Take: How do you get trust without transparency? GenAI by nature doesn’t offer much transparency. As a result, data quality and governance are of paramount importance. There is a need to be able to audit data sources for PII, MNPI, usage rights, etc.

The discussion points towards firms moving from a centralized model to more of a federated one … a central group might set policy & direction, but individual groups determine how they use and engage with the data.

AI will amplify bad data, so there was universal agreement on the importance of doubling-down on the basics of data governance.

Highlights (20-minute run time):

Minute 00:45 – Dan Joldzic from Alexandria talks data governance

Minute 01:45 – Jason Albert from ADP talks data governance

Minute 02:30 – Glenn Kurban of Capco talks data governance

Minute 03:30 – Mike Pappacena of ACA Group talks data governance

Minute 04:45 – what does data governance look like, “centralized” vs “federated”

Minute 07:10 – importance of understanding your data in detail & what is important to your firm

Minute 09:40 – risk controls with GenAI & AI Tools

Minute 12:00 – inherent lack of transparency with GenAI (makes it more important to know what data is going in)

Minute 15:30 – predictions for 5-10 years from now

Minute 18:30 – opportunity to leverage GenAI tools

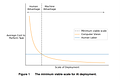

Source: MIT Sloan published Automation may be possible — but when will businesses want to do it?. February 2024.

My Take: AI adoption will be impactful, but likely more gradual than hoped/feared. Amara’s Law in full effect.

Researchers Neil Thompson, Maja S. Svanberg, and Wensu Li of MIT; Martin Fleming of The Productivity Institute; and Brian Goehring of IBM.

The paper addresses “three important shortcomings of AI exposure models to construct a more economically-grounded estimate of task automation.

First, we survey workers familiar with end-use tasks to understand what performance would be required of an automated system.

Second, we model the cost of building AI systems capable of reaching that level of performance. This cost estimate is essential to understanding the deployment of AI, since technically-exacting systems can be enormously expensive.

And third, we model the decision about whether AI adoption is economically-attractive. The result is the first end-to-end AI automation model.”

Page 4
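For readers who think in code, the three steps quoted above can be sketched as a toy decision rule. This is my own rough illustration, not the paper's actual model: the `Task` fields, the `system_cost` curve, the `base_cost` figure, and the five-year horizon are all hypothetical assumptions chosen only to show the shape of the logic.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    required_accuracy: float   # step 1: performance that workers say is needed (0 to 1)
    annual_labor_cost: float   # wages currently spent on the task, per year


def system_cost(required_accuracy: float, base_cost: float = 50_000.0) -> float:
    """Step 2 (hypothetical cost curve): build cost rises steeply as the
    required accuracy approaches 100%."""
    if not 0.0 <= required_accuracy < 1.0:
        raise ValueError("required_accuracy must be in [0, 1)")
    return base_cost / (1.0 - required_accuracy)


def is_attractive(task: Task, years: int = 5) -> bool:
    """Step 3: adopt only if labor savings over the horizon exceed the build cost."""
    return task.annual_labor_cost * years > system_cost(task.required_accuracy)


if __name__ == "__main__":
    for task in (
        Task("routine inspection", required_accuracy=0.90, annual_labor_cost=200_000),
        Task("exacting inspection", required_accuracy=0.99, annual_labor_cost=200_000),
    ):
        print(task.name, "->", "automate" if is_attractive(task) else "wait")
```

Under these made-up numbers, the same labor cost justifies automation at a 90% accuracy requirement but not at 99%, which is the "technically possible, but not yet economical" gap the article describes.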

Source: