Alternative Data Weekly #277

Theme: where does value flow when all data can be accessed by anyone?

Special thanks to our sponsor Sequentum.

Sequentum’s latest AI-enabled tooling, now including MCP, helps move data workflows from probabilistic outputs to deterministic, auditable pipelines – Sign up for your FREE TRIAL of Sequentum Cloud.

QUOTES

“The interface moat is dead. What remains is data. And if your data isn’t proprietary, neither is your business.” – Nicolas Bustamante, The Crumbling Workflow Moat: Aggregation Theory’s Final Chapter

“Customers are frustrated, workflows are inefficient, pricing is opaque, access is gated, rights are unclear, and integration is painful. Innovation doesn’t create that demand, it reveals it and removes friction.” – Dan Entrup, Are You a Software Company or a Data Company?

News

Pods

Charts

Final Thoughts (Jevons Paradox for AI)

#1 – Carbon Arc published Convergence: The Great Tokenization. January 2026.

My Take: This paper does a great job explaining why data markets need to change & how they are going to change.

The key idea is that two very different meanings of “tokenization” are converging. AI tokenizes information so machines can reason. Financial systems tokenize value so assets can clear and settle.

Autonomous agents want verified, structured, entity-centric data, priced on consumption & settled in real time. Traditional subscriptions and static datasets don’t fit into this consumption model. If agents are going to transact, something needs to change.

Carbon Arc is building the market infrastructure for data (i.e., standardization, compliance, settlement).

This is a very thoughtful articulation of where data markets and infrastructure are heading.

#2 – RavenPack published The State of AI in Finance 2026. January 2026.

My Take: The 42-page report brings together a series of shorter perspectives from a wide range of people, from Cambridge professor Mark Salmon to Petter Kolm of NYU’s Courant Institute.

Workflows are going to be key. When everyone can easily access all the same data and information, who wins? Those implementing the smartest workflows and those asking the smartest questions.

To launch agentic systems that act on their own, there will need to be strict workflows in place, many of which are stuck in the brains of the highest-value employees. Once data is a commodity, it will be institutional knowledge (the best workflows) & domain expertise (the best questions) that win.

#3 – Travis May published Moats Matter Again. February 2026.

My Take: Who is going to win? Where is value going to flow? “‘Data currencies’ and ‘data marketplaces’ have relatively strong network effects, but good curation and dashboards don’t. As a result, some companies are much more subject to disruption from AI-native approaches.”

We won’t need visualization tools (BI, dashboards, etc.) as each person will create their own in the age of AI. So what then are the “strong intrinsic network effects”? Software companies whose moat was high switching costs are seeing that benefit disappear.

The moat must be proprietary data, and increasingly it will be the workflow you put around that data.

BONUS: Nicolas Bustamante published The Crumbling Workflow Moat: Aggregation Theory’s Final Chapter. February 2026. “The user never touched any specialized interface. They don’t know (or care) which data provider the LLM queried. The LLM found the cheapest available source with adequate coverage.”

What else I am reading:

Derek Robertson and Matthew Kosinski published Observability trends 2026. January 2026.

Tina He published The Boring Businesses That Will Dominate the AI Era. January 2026.

Sean Michael Kerner published The trust paradox killing AI at scale: 76% of data leaders can’t govern what employees already use. January 2026.

Austin Parker published It’s The End Of Observability As We Know It (And I Feel Fine). June 2025.

Source: Mark Fleming-Williams published The Evan Reich Episode. February 2026.

My Take: Great discussion between veterans of the Alt Data space.

There is a lot of good stuff in here, but one thing that resonated with me is the idea that AI is going to eliminate the need for the drudgery jobs. At first glance that is positive, as the real value comes from human thought, deliberation, and choices. But how do we develop the wisdom to make good choices without the years of drudgery? The answer is to become a student of whatever it is you are doing. Dive into the history. Use the wonderful tools at your disposal to become a student of your vocation. I love this sentiment.

What has changed over the years? The quality of data is better, tools are better, data resides in different places, and people are generally much more comfortable with data & data vendors. But that comfort calls for caution: be “careful about what is going into the pot” (i.e., data sources). You need to know the details of the data going into your decision to know what might go wrong. Understand “the why” of it all.

Being a “people person” matters in this space. This is less replaceable by AI.

High-performing data teams should not make full use of the catalog … they should be finding their own sources. The struggle is finding the right, new data source. It takes a ton of time and is totally worth it.

Price is a discussion. The lowest price is not always best when thinking about the entire relationship (quality, service, etc.).

HIGHLIGHTS (59-Minute Run Time)

Minute 03:30 – interview starts & Evan’s background (wearing a lot of hats).

Minute 06:15 – how has the data sourcing role changed over time?

Minute 13:00 – hiring for the data sourcing role.

Minute 18:00 – is data sourcing a strategic risk, given what they know about the workings of a firm?

Minute 20:00 – where is the data coming from?

Minute 26:00 – new assets being traded because of access to alt data?

Minute 29:00 – how to find new data sources? The challenge of finding the right person at big companies.

Minute 37:00 – price discussion.

Minute 41:00 – the decreasing value of processing public data.

Minute 44:00 – data from different geographies (China, Europe).

Minute 48:00 – roles at risk from AI (data engineering).

Minute 53:00 – Evan’s new role (BWG).

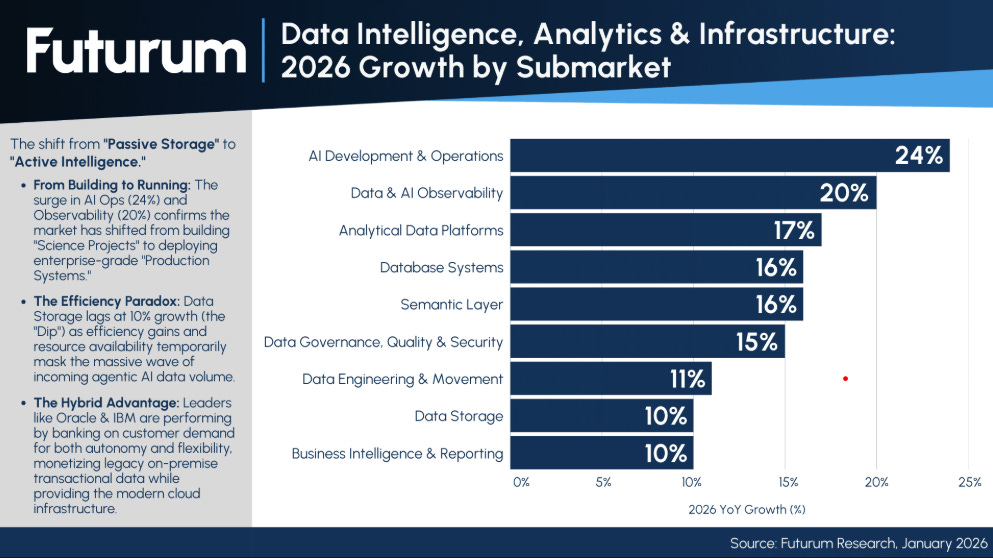

SOURCE 1: Brad Shimmin of Futurum published $1.2T Data Market by 2031: Agentic AI Replaces Data Pipelines. January 2026.

Figure 1: Data Intelligence, Analytics, and Infrastructure – 2026 Growth by Submarket.

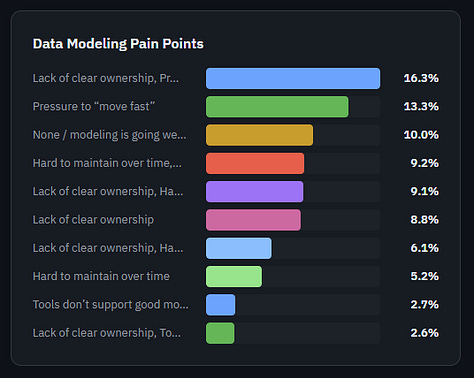

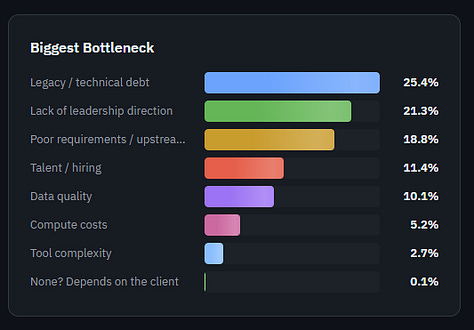

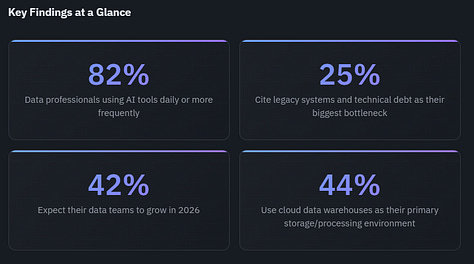

SOURCE 2: Joe Reis published The 2026 State of Data Engineering Survey (Interactive). February 2026.

Cool interactive display here.

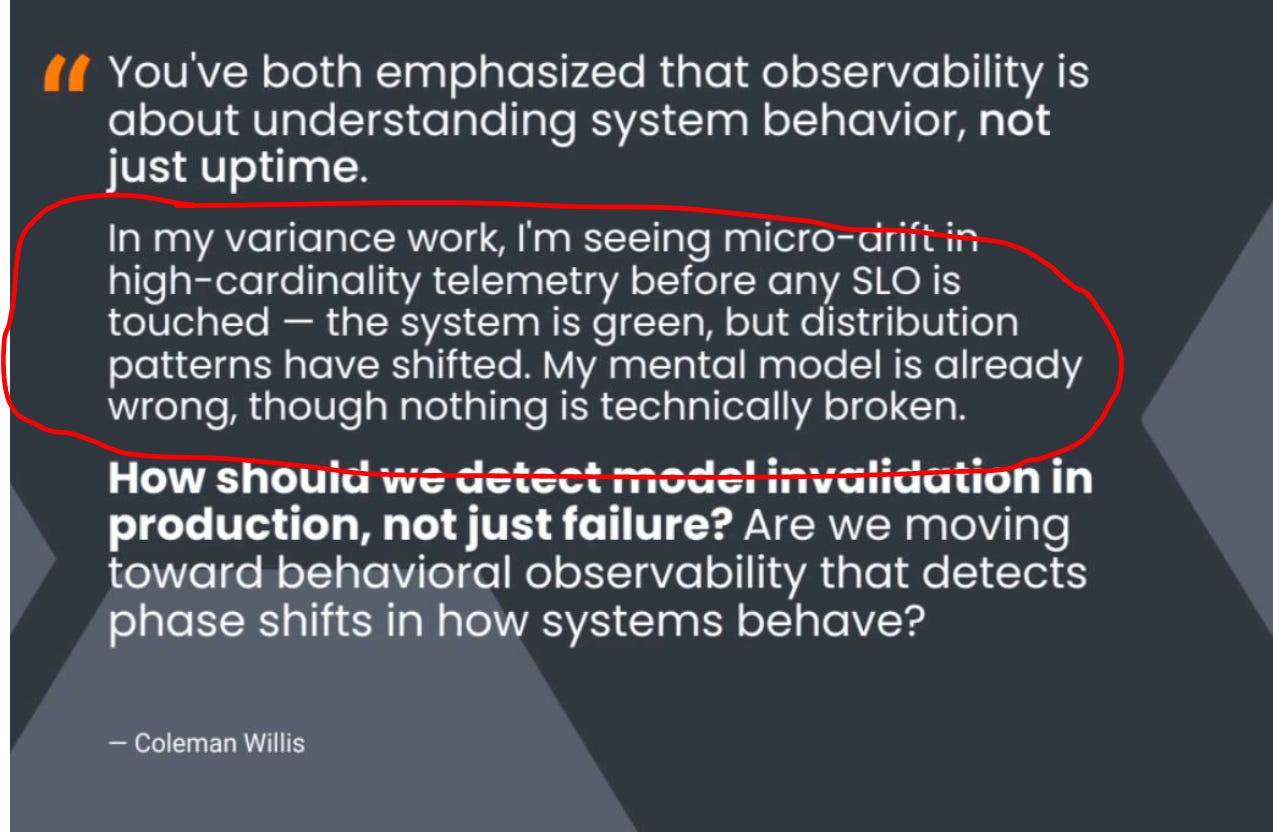

SOURCE 3: Christine Yen & Charity Majors shared The Next Era of Observability: Founders’ Reflections. February 2026.

“Join Honeycomb Co-founders, Christine Yen and Charity Majors, as they revisit their most controversial takes from 2016.”

The quote below struck me as interesting because this type of early detection is what we are addressing at SymetryML.

I’ve seen more references to Jevons Paradox in recent weeks than I have in years. I am drawn to this concept because it starts with an abundance mindset.

Jevons Paradox is an economic theory stating that as technology increases the efficiency with which a resource is used, the total consumption of that resource increases rather than decreases.
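One way to make the definition concrete is a toy model. The sketch below assumes a constant-elasticity demand curve, which is an illustrative assumption and not something taken from any of the pieces cited in this issue. The function name `resource_use` and its parameters (`efficiency`, `elasticity`, `resource_price`, `scale`) are hypothetical.

```python
def resource_use(efficiency: float, elasticity: float,
                 resource_price: float = 1.0, scale: float = 100.0) -> float:
    """Total resource consumed in a toy constant-elasticity demand model."""
    # The cost of one unit of useful output falls as efficiency rises.
    cost_per_output = resource_price / efficiency
    # Assumed demand curve: output demanded = scale * cost^(-elasticity).
    output_demanded = scale * cost_per_output ** (-elasticity)
    # Resource needed to produce the demanded output.
    return output_demanded / efficiency


if __name__ == "__main__":
    # Compare total resource use before and after efficiency doubles.
    for elasticity in (0.5, 1.0, 1.5):
        before = resource_use(efficiency=1.0, elasticity=elasticity)
        after = resource_use(efficiency=2.0, elasticity=elasticity)
        print(f"elasticity={elasticity}: {before:.1f} -> {after:.1f}")
```

In this toy setup, doubling efficiency raises total resource use only when demand elasticity is above 1. That is the Jevons case: demand for the output grows faster than the efficiency gain saves. With elasticity below 1, total use falls, so whether the paradox shows up in practice depends on how responsive demand really is.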

As far back as June 2023, I’ve been seeing the parallels between what is happening with AI and the concept of Jevons Paradox (see here & here). I frankly have struggled to articulate these thoughts and am impressed with how well some of these visionary thinkers tell us how it will work.

Making it easier to be more productive will lead to far more things to be done and far more jobs than there are humans to do them. There will be disruptions along the way, but this will be the trend over the next decade.

Ambitious people who work 60+ hours a week will not start working 30 hours a week with better tools. They will create more.

Joe Reis: The Jevons Paradox of Software and the Ten Minute App

“As it becomes trivially easy to build, we don’t build less. We build dramatically more. Every problem that was previously ‘not worth writing software for’ suddenly is.”

“It’s less clear that it replaces the connective tissue that holds complex organizations together.”

In my opinion, the “connective tissue” moves from the systems of record to the systems of work (workflow). Everyone will try to emulate the most productive workflow. It will be far more than just that one guy in the office who knows how to use the Bloomberg terminal better than everyone else.

Greg Rosalsky of NPR published Why the AI world is suddenly obsessed with a 160-year-old economics paradox.

“…as AI makes certain occupations more efficient, it could lead to an increase in demand for human labor, not mass layoffs.”

Stefanija Tenekedjieva Haans published The Jevons Paradox and its implications in the AI era

“The Jevons Paradox is evident here: the more efficient and accessible SaaS products become, the more companies adopt them, creating an expanded demand for custom development.”

Prateek Sharma published The Jevons Paradox In Cloud Computing: A Thermodynamics Perspective

Despite massive efficiency gains in hyperscale clouds, energy use keeps rising because efficiency fuels growth (Jevons’ Paradox holds in cloud computing).

Alexandra Sasha Luccioni published From Efficiency Gains to Rebound Effects: The Problem of Jevons’ Paradox in AI’s Polarized Environmental Debate

Making AI more energy efficient will result in more AI-related energy being consumed.